Platform Overview

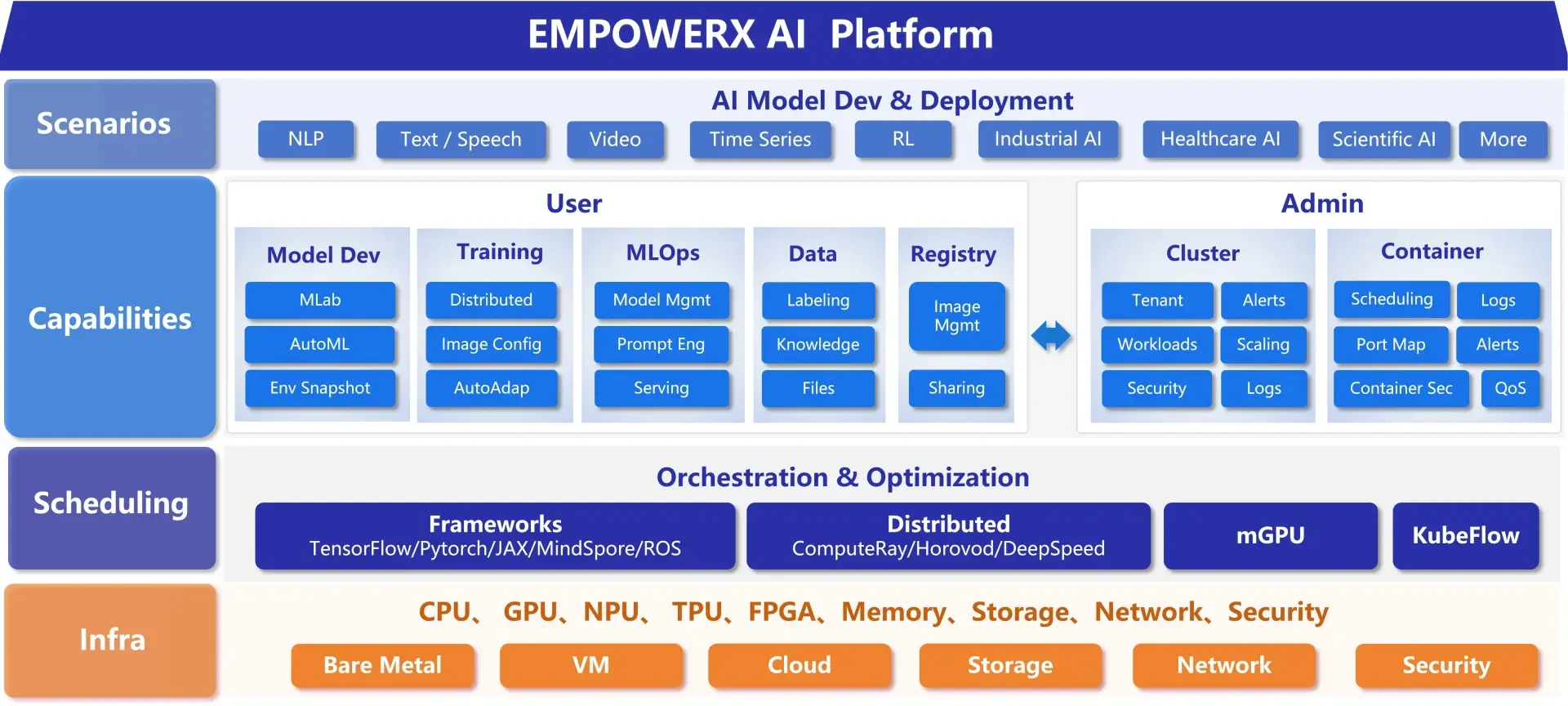

The EMPOWERX AI Management Platform is built on a PaaS + AI architecture and integrates multiple AI and machine learning frameworks to orchestrate GPU and heterogeneous computing resources efficiently.

The platform provides end-to-end support for AI model development, training, deployment, and operations. It covers the entire lifecycle of large language models (LLMs): resource and asset management, model training and fine-tuning, prompt engineering, and model deployment and inference services.

By enabling development, collaboration, training, and inference in a dedicated, secure, and compliant environment, EMPOWERX helps organizations build private, scenario-specific large models and AI applications with full control over data and infrastructure.

Core Capabilities

Model Development

Provides the MLab interactive notebook for online development. Supports resource monitoring, snapshot backup and recovery, web access, and remote terminal connections for efficient model experimentation.

Model Training

Supports job submission, version control, and distributed training. Includes automatic resource reclamation, priority scheduling, queuing mechanisms, and automated hyperparameter tuning to improve training efficiency.
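To make the combination of priority scheduling, queuing, and automatic resource reclamation more concrete, here is a minimal sketch of how such a scheduler could behave. It is an illustration only, not the EMPOWERX implementation or API: the `GpuScheduler` class, its method names, and the strict "highest priority first, no skipping ahead" policy are all assumptions made for this example.

```python
import heapq
import itertools


class GpuScheduler:
    """Hypothetical priority queue for training jobs on a fixed GPU pool."""

    def __init__(self, total_gpus):
        self.free_gpus = total_gpus
        self.running = {}                  # job name -> GPUs held
        self._queue = []                   # heap of (-priority, seq, name, gpus)
        self._seq = itertools.count()      # tie-breaker: earlier submission first

    def submit(self, name, gpus, priority=0):
        """Queue a job; higher priority values are served first."""
        heapq.heappush(self._queue, (-priority, next(self._seq), name, gpus))
        return self._dispatch()

    def finish(self, name):
        """Reclaim the GPUs of a finished job and start any waiting jobs."""
        self.free_gpus += self.running.pop(name)
        return self._dispatch()

    def _dispatch(self):
        """Start queued jobs in priority order while the head of the queue fits."""
        started = []
        while self._queue and self._queue[0][3] <= self.free_gpus:
            _, _, name, gpus = heapq.heappop(self._queue)
            self.free_gpus -= gpus
            self.running[name] = gpus
            started.append(name)
        return started


sched = GpuScheduler(total_gpus=4)
sched.submit("baseline", gpus=4)               # starts immediately
sched.submit("sweep-1", gpus=2, priority=1)    # queued, pool is full
sched.submit("urgent", gpus=2, priority=5)     # queued, ahead of sweep-1
print(sched.finish("baseline"))                # ['urgent', 'sweep-1']
```

In this sketch, freed GPUs are handed to the highest-priority waiting job as soon as a running job completes; a production scheduler would typically also handle preemption, quotas, and backfilling.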

Model Deployment

Supports both cloud and edge deployment. Enables customized inference scripts and environments, with full lifecycle management of online inference services.

Data Management

Provides dataset management and data labeling capabilities. Supports data storage, sharing, multi-version model data management, and one-click model publishing.

Image Management

Includes pre-built images and supports user-defined images. Offers full lifecycle management, including image upload, update, tagging, compatibility adaptation, and deletion.

Platform Advantages

Open & Neutral

An open and vendor-neutral AI platform supporting multiple open-source deep learning frameworks, without locking users into any specific technology stack or service ecosystem.

Cost Efficient

Advanced resource scheduling improves utilization and reduces operational overhead.

Flexible billing models help lower initial investment and long-term infrastructure costs.

Ready to Use

Pre-integrated with mainstream AI frameworks such as TensorFlow, PyTorch, and MXNet.

MLab enables rapid experimentation with one-click access to code and datasets.

Flexible & Elastic

Training environments and tasks can be created on demand. Flexible resource allocation and integrated MLOps workflows support the full AI lifecycle from development to deployment.

High Performance

Built on GPU-based infrastructure and high-density clusters. Delivers high concurrency, high throughput, and low latency, with up to 50× performance gains in scientific computing workloads.

Secure & Isolated

Built-in isolation mechanisms and network monitoring ensure data protection, workload separation, and secure platform operations.

Industry Use Cases

University IC Computing Resource Optimization

By deploying the EMPOWERX AI platform, a leading university’s integrated circuit school unified the scheduling of new and legacy servers, enabling dynamic scaling and on-demand allocation. The integration of heterogeneous computing resources reduced costs and improved utilization, while the visual AI development environment significantly enhanced student experimentation efficiency and supported AI–EDA integrated education.

Reinforcement Learning Research Institute

Using distributed computing and automated scheduling, the platform enabled efficient execution of large-scale parallel training tasks.

Heterogeneous resource management and multi-cluster monitoring optimized resource allocation in real time, while interactive development tools lowered barriers for researchers to quickly conduct experiments.

AI + Music Innovation in Higher Education

Through unified resource management and scheduling, multiple clusters and heterogeneous accelerators were integrated into a single platform.

The one-stop AI development workflow simplified music data processing and model training across the entire pipeline.

Automotive Intelligent Transformation

The platform integrated multi-cluster computing resources through distributed computing and heterogeneous resource management.

Job-based training accelerated model development, while model management and online inference services streamlined deployment and reduced engineering complexity, significantly improving R&D efficiency.