Hardware Acceleration Cluster Solution for an Integrated Circuits Research Institute

AI-assisted integrated circuit (IC) placement and routing has emerged as a high-potential research direction in advanced semiconductor design. By integrating artificial intelligence algorithms with EDA methodologies, this approach aims to improve layout quality, routing efficiency, convergence performance, and overall design productivity through intelligent optimization techniques.

As device complexity increases and process nodes continue to scale, AI-driven EDA workflows demand significantly higher computational resources than traditional rule-based or manual design approaches. To support this shift, a dedicated hardware acceleration cluster was deployed to enable large-scale AI-assisted placement and routing research.

Project Background:

Placement and routing tasks in modern IC design are becoming increasingly complex due to:

- Rapid growth in circuit scale and transistor density

- Shortened product development cycles

- Stricter requirements on power, performance, and signal integrity

- Advanced process technologies down to 5nm and below

Compared to conventional methodologies, AI-assisted placement and routing requires:

- Large-scale data processing

- Graph-based feature extraction

- Model training and iterative optimization

- High-speed SPICE simulation validation

These workloads demand substantial computing power, memory capacity, storage throughput, and GPU-based parallel acceleration. To address these requirements, a scalable heterogeneous hardware acceleration cluster was designed to support AI-EDA tool development and simulation tasks.
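To make the "graph-based feature extraction" step above concrete, here is a minimal sketch. It is not the institute's actual pipeline. It assumes a hypothetical toy netlist (a dictionary mapping net names to the cells they connect) and uses clique expansion, one common way to turn a netlist hypergraph into a cell-to-cell graph, to derive two simple per-cell features. All names (`NETLIST`, `build_cell_graph`, `extract_features`) are illustrative.

```python
from collections import defaultdict
from itertools import combinations

# Hypothetical toy netlist: each net maps to the cells it connects.
NETLIST = {
    "n1": ["U1", "U2"],
    "n2": ["U2", "U3", "U4"],
    "n3": ["U1", "U4"],
}

def build_cell_graph(netlist):
    """Clique expansion: connect every pair of cells that share a net."""
    adjacency = defaultdict(set)
    for cells in netlist.values():
        for a, b in combinations(cells, 2):
            adjacency[a].add(b)
            adjacency[b].add(a)
    return adjacency

def extract_features(netlist):
    """Per-cell features: neighbor count (degree) and number of nets touched."""
    adjacency = build_cell_graph(netlist)
    net_count = defaultdict(int)
    for cells in netlist.values():
        for cell in cells:
            net_count[cell] += 1
    return {
        cell: {"degree": len(adjacency[cell]), "nets": net_count[cell]}
        for cell in sorted(net_count)
    }

features = extract_features(NETLIST)
for cell, feats in features.items():
    print(cell, feats)
```

On real designs the same idea is applied to millions of cells, which is where the memory and GPU requirements described in this document come from.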

Core Requirements:

High-Performance Computing

AI-assisted placement and routing involves compute-intensive processes such as model training, circuit data preprocessing, and optimization algorithms. Multi-core CPUs combined with GPU acceleration provide the necessary performance and parallel efficiency.

Large Memory Capacity

Massive circuit datasets, model parameters, and intermediate results must be processed in memory. Sufficient RAM capacity ensures stable execution and minimizes data-swapping bottlenecks.

High-Speed Storage

Simulation outputs, intermediate datasets, and model checkpoints generate significant storage demands. Enterprise-grade SSDs and high-performance storage arrays ensure fast data access and reliability.

Parallel Acceleration

GPU-based parallel computing significantly improves model training speed and SPICE simulation efficiency. A heterogeneous CPU-GPU architecture is essential for achieving scalable performance gains.

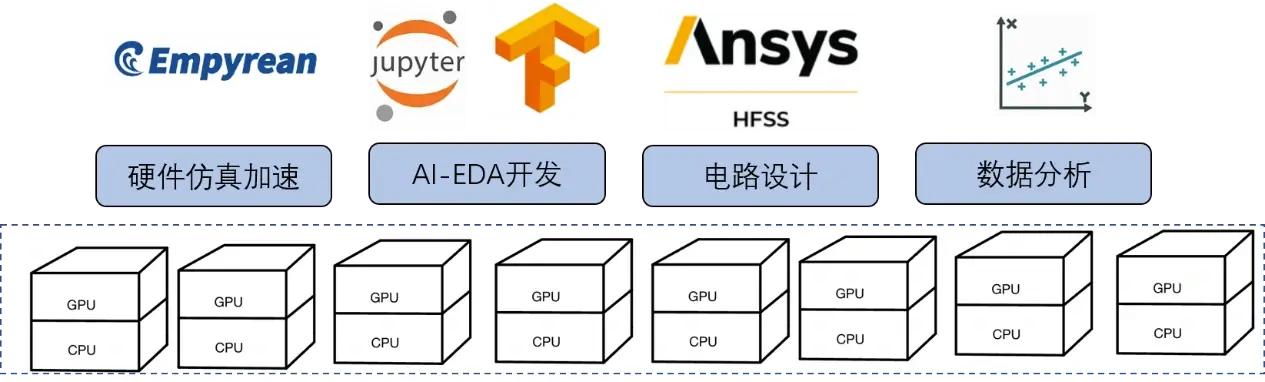

Solution Architecture

Structural diagram:

Hardware-Accelerated Cluster Node (Single Node Configuration):

- Integrated with 4 × NVIDIA A100 40GB PCIe GPUs

- Designed for high-density AI training and SPICE simulation workloads

- Optimized for CPU-GPU heterogeneous acceleration

Each GPU delivers high double-precision and mixed-precision floating-point performance suitable for AI-EDA and circuit simulation tasks.

| Precision | Peak performance | With sparsity* |
|---|---|---|
| FP64 | 9.7 TFLOPS | - |
| FP64 Tensor Core | 19.5 TFLOPS | - |
| FP32 | 19.5 TFLOPS | - |
| Tensor Float 32 (TF32) | 156 TFLOPS | 312 TFLOPS* |
| BFLOAT16 Tensor Core | 312 TFLOPS | 624 TFLOPS* |
| FP16 Tensor Core | 312 TFLOPS | 624 TFLOPS* |
| INT8 Tensor Core | 624 TOPS | 1248 TOPS* |

Empyrean ALPS-GT Heterogeneous Accelerator Platform:

The platform integrates eight NVIDIA HGX A100 GPUs connected through NVLink:

- High-bandwidth GPU-to-GPU communication architecture

- Designed for large-scale distributed AI model training and high-capacity SPICE simulation

This configuration improves scalability and supports advanced research workloads that require high parallel efficiency.

| Precision | Peak performance | With sparsity* |
|---|---|---|
| FP64 | 9.7 TFLOPS | - |
| FP64 Tensor Core | 19.5 TFLOPS | - |
| FP32 | 19.5 TFLOPS | - |
| Tensor Float 32 (TF32) | 156 TFLOPS | 312 TFLOPS* |
| BFLOAT16 Tensor Core | 312 TFLOPS | 624 TFLOPS* |
| FP16 Tensor Core | 312 TFLOPS | 624 TFLOPS* |
| INT8 Tensor Core | 624 TOPS | 1248 TOPS* |
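The per-GPU figures listed for both configurations can be combined into a rough per-node ceiling. The sketch below is simple arithmetic under the assumption that peak throughput scales linearly with GPU count. That gives a theoretical upper bound, not a measured result, since real workloads are limited by interconnect, memory bandwidth, and software efficiency. The function name `aggregate_peak` is illustrative.

```python
# Per-GPU dense peak figures in TFLOPS, taken from the tables above.
# INT8 is omitted because integer throughput is counted in operations, not FLOPS.
PEAK_TFLOPS_PER_GPU = {
    "FP64": 9.7,
    "FP64 Tensor Core": 19.5,
    "FP32": 19.5,
    "TF32": 156.0,
    "BF16 Tensor Core": 312.0,
    "FP16 Tensor Core": 312.0,
}

def aggregate_peak(gpus_per_node, per_gpu=PEAK_TFLOPS_PER_GPU):
    """Theoretical upper bound: per-GPU peak multiplied by the GPU count."""
    return {name: round(value * gpus_per_node, 1) for name, value in per_gpu.items()}

pcie_node = aggregate_peak(4)  # single node with 4 x A100 40GB PCIe
hgx_node = aggregate_peak(8)   # platform with 8 x HGX A100

# GPU memory of the 4-GPU node; the source states 40 GB per PCIe card.
pcie_node_gpu_memory_gb = 4 * 40

print("4-GPU node FP64 peak:", pcie_node["FP64"], "TFLOPS")
print("8-GPU platform FP64 peak:", hgx_node["FP64"], "TFLOPS")
print("4-GPU node GPU memory:", pcie_node_gpu_memory_gb, "GB")
```

The FP64 rows matter most for SPICE-style simulation, which relies on double precision, while the TF32, BF16, and FP16 rows are more relevant to model training.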

Features of the Heterogeneous Acceleration Solution:

SPICE Circuit Simulation

- Simulation capacity exceeding 100 million devices

- Proprietary CPU/GPU parallel simulation technology

- Up to 10× acceleration compared to CPU-based commercial parallel SPICE simulators

- Mixed-signal simulation support

- Co-simulation with industry-leading digital simulators

- Strong convergence and performance for power management circuits

- Comprehensive static and dynamic circuit checking

- Save/Recover functionality for resuming a simulation from a saved checkpoint

- Support for advanced 5nm process nodes

SMS-GT Matrix Solver

- GPU-native matrix solving architecture

- Fully leverages GPU computational resources

- Lossless numerical precision

- More than 10× acceleration compared to CPU-based parallel matrix solvers

This solver significantly enhances simulation throughput for large-scale analog and mixed-signal designs.
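As background on why the matrix solver dominates simulation time: SPICE-class simulators repeatedly solve linear systems of the form G·v = i that arise from nodal analysis of the circuit. The sketch below is a generic illustration of that step and is not the SMS-GT algorithm, which the source does not describe. It solves an invented three-resistor circuit with dense Gaussian elimination in pure Python. Production solvers use sparse factorization on far larger matrices, and all component values here are assumptions chosen for illustration.

```python
def solve_linear_system(matrix, rhs):
    """Solve matrix * x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    # Build the augmented matrix [matrix | rhs] without mutating the inputs.
    a = [row[:] + [rhs[k]] for k, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        if abs(a[pivot][col]) < 1e-12:
            raise ValueError("singular matrix")
        a[col], a[pivot] = a[pivot], a[col]
        for row in range(col + 1, n):
            factor = a[row][col] / a[col][col]
            for k in range(col, n + 1):
                a[row][k] -= factor * a[col][k]
    # Back substitution.
    x = [0.0] * n
    for row in range(n - 1, -1, -1):
        known = sum(a[row][k] * x[k] for k in range(row + 1, n))
        x[row] = (a[row][n] - known) / a[row][row]
    return x

# Invented circuit: a 1 A source drives node 1; R1 (node 1 to ground),
# R2 (node 1 to node 2), and R3 (node 2 to ground) are each 1 ohm.
R1 = R2 = R3 = 1.0
G = [
    [1 / R1 + 1 / R2, -1 / R2],
    [-1 / R2, 1 / R2 + 1 / R3],
]
i_src = [1.0, 0.0]

voltages = solve_linear_system(G, i_src)
print("node voltages:", voltages)
```

A transient simulation performs a solve like this at every Newton iteration of every time step, so for designs with many millions of devices the solver speed largely sets overall throughput.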

| Test case | CPU-based tools (hours) | Hardware-accelerated cluster (hours) | Speedup |
|---|---|---|---|
| Transmitter | 157.4 | 18.1 | 8.7X |
| Serdes_vco | 135.1 | 9.8 | 13.8X |
| ADC | 174.1 | 16.3 | 10.7X |
| Serdes_TX | 2752.8 | 12.0 | 22.9X |
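The speedup column is the CPU runtime divided by the accelerated runtime. The short sketch below recomputes it from the hours in the table and compares the result with the printed ratio. The figures are copied from the table as printed, and the function name `check_speedups` and the tolerance are illustrative. The first three rows reproduce their stated ratios. The Serdes_TX row does not (2752.8 / 12.0 is about 229.4), so one figure in that row may be a transcription error. The sketch flags the mismatch and does not guess which value is wrong.

```python
# (test case, CPU hours, accelerated hours, speedup as printed in the table)
RESULTS = [
    ("Transmitter", 157.4, 18.1, 8.7),
    ("Serdes_vco", 135.1, 9.8, 13.8),
    ("ADC", 174.1, 16.3, 10.7),
    ("Serdes_TX", 2752.8, 12.0, 22.9),
]

def check_speedups(results, tolerance=0.05):
    """Recompute speedup = CPU hours / accelerated hours and compare with the stated value."""
    report = []
    for name, cpu_hours, accel_hours, stated in results:
        computed = cpu_hours / accel_hours
        consistent = abs(computed - stated) <= tolerance
        report.append((name, round(computed, 1), stated, consistent))
    return report

report = check_speedups(RESULTS)
for name, computed, stated, consistent in report:
    status = "ok" if consistent else "mismatch"
    print(f"{name}: computed {computed}X, stated {stated}X ({status})")
```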

Conclusion:

AI-assisted placement and routing represents a strategic advancement in modern IC design research. As circuit complexity and AI model scale continue to increase, scalable heterogeneous acceleration infrastructure becomes a critical enabler.

Through the deployment of a high-performance hardware acceleration cluster, the research institute achieved a substantial upgrade in computational capability, enabling the transition toward next-generation AI-driven intelligent layout planning systems. This enhancement supports improved design quality, faster iteration cycles, and more efficient development workflows.

EmpowerX provides integrated hardware acceleration solutions tailored for AI-EDA and high-performance semiconductor research environments, enabling institutions and enterprises to build scalable, future-ready computing infrastructure.