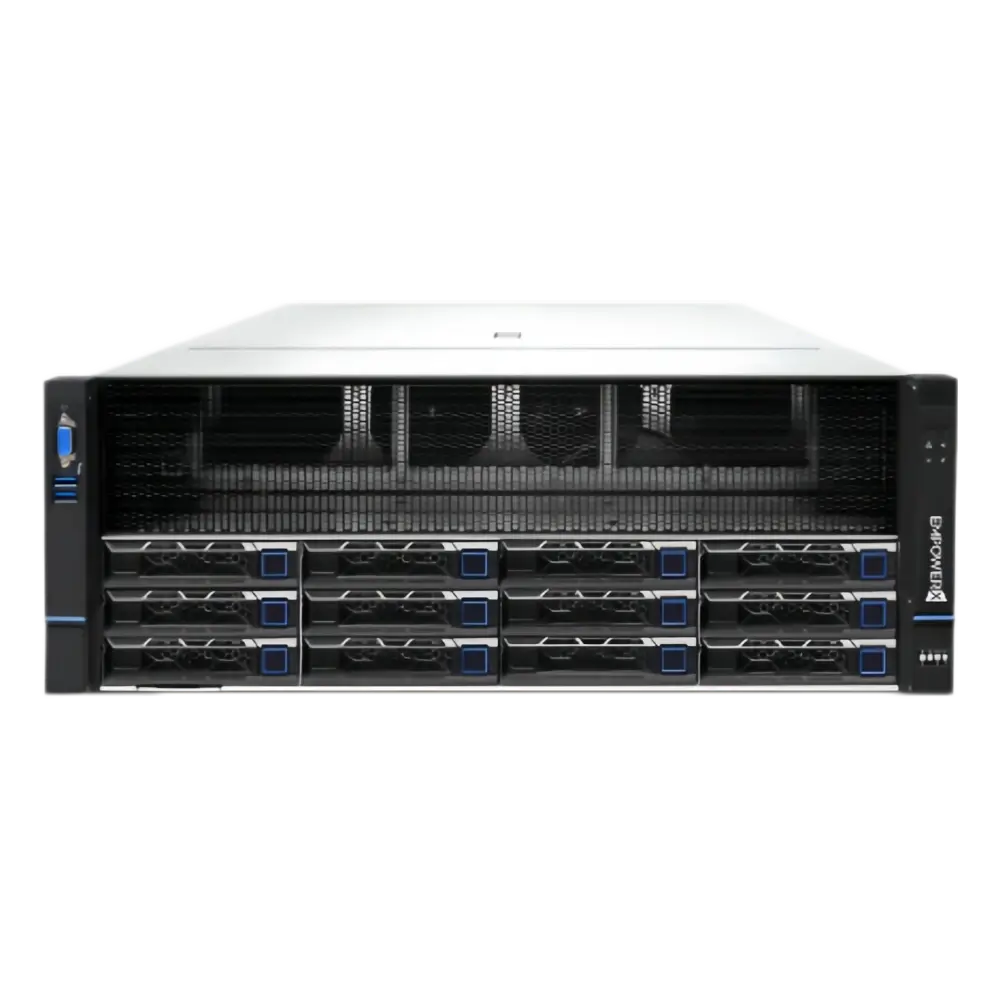

EMPOWERX LLM Integrated Appliance

Built on our in-house open AI platform, the EMPOWERX Large Language Model Integrated Appliance delivers a ready-to-use, high-performance solution for enterprise generative AI.

The system offers multiple hardware configurations to meet different enterprise requirements, ensuring performance, stability, and scalability. DeepSeek models in 32B, 70B, and 671B sizes can be pre-installed on request, eliminating complex deployment steps.

With fast delivery, plug-and-play deployment, high performance, and secure operation, the appliance enables organizations to quickly deploy private large models in a controlled environment.

Flagship Edition: Extreme Compute Power · Built for the Future (671B)

Product Model

EG4812-G4 Full-Scale LLM Inference Appliance (671B)

Configuration

CPU: 2 × 4th/5th Gen Intel® Xeon® processors

Memory: ≥ 1 TB

GPU: 8 × NVIDIA GPUs

Supported Models

DeepSeek full-scale models

Typical Scenarios

Ultra-large-scale AI research, AGI exploration, cloud-based inference services

Key Advantages

Only two systems are required to deploy the full 671B DeepSeek model.

In-house distributed inference technology supports high-concurrency workloads with stable performance.

Professional Edition: Balanced Performance · Enterprise-Grade Inference (70B)

Product Model

EG4804-G4 High-Performance LLM Inference Appliance

Configuration

CPU: 2 × 4th/5th Gen Intel® Xeon® processors

Memory: 256 GB – 1 TB DDR5

GPU: 4 × NVIDIA GPUs

Supported Models

DeepSeek-R1-Distill-Llama-70B and smaller models

Typical Scenarios

Complex reasoning tasks, large-scale knowledge-based Q&A, enterprise AI applications

Key Advantages

Flexible GPU scalability with higher compute density.

Optimizes data center space utilization while reducing overall TCO.

Essential Edition: Reliable Performance · Flexible and Efficient (32B)

Product Model

EG4412-G4 LLM Inference Appliance

Configuration

CPU: 2 × 4th/5th Gen Intel® Xeon® processors

Memory: 64 GB – 256 GB DDR5

GPU: 2 × NVIDIA GPUs

Supported Models

DeepSeek-R1-Distill-Qwen-32B and smaller models

Typical Scenarios

On-premises conversational AI, text and code generation, multi-task inference

Key Advantages

Wide applicability with low latency and high efficiency.

A cost-effective solution for enterprises seeking practical generative AI deployment.
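
As a rough sizing aid, the pairing of model size and GPU count in each edition can be checked with back-of-envelope arithmetic: weight memory is approximately parameter count times bytes per parameter, split across the GPUs. The sketch below is illustrative only. The GPU counts come from the configurations above (the Flagship figure assumes two 8-GPU systems), while the FP8 and BF16 precisions and the helper names are assumptions, since this document does not state the GPU model, per-GPU memory, or serving precision. KV cache, activations, and runtime overhead are not included.

```python
# Back-of-envelope weight-memory estimate per GPU.
# Assumptions (not from the datasheet): serving precision is FP8 or BF16;
# KV cache, activations, and runtime overhead are ignored.
BYTES_PER_PARAM = {"fp8": 1, "bf16": 2}


def weights_gb(params_billion: float, precision: str) -> float:
    """Approximate weight footprint in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


def per_gpu_gb(params_billion: float, precision: str, gpu_count: int) -> float:
    """Weight footprint per GPU, assuming an even split across all GPUs."""
    return weights_gb(params_billion, precision) / gpu_count


# (edition, model size in billions of parameters, GPU count)
EDITIONS = [
    ("Essential", 32, 2),
    ("Professional", 70, 4),
    ("Flagship (two systems)", 671, 16),
]

for name, params, gpus in EDITIONS:
    for precision in BYTES_PER_PARAM:
        share = per_gpu_gb(params, precision, gpus)
        print(f"{name}: {params}B @ {precision} -> {share:.1f} GB of weights per GPU")
```

The per-GPU figures are a lower bound on required GPU memory; actual headroom depends on the GPU model, context length, and concurrency, none of which are specified here.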