All-Flash Server Solution for Real-Time Financial Analytics

As AI technologies such as AI-generated content (AIGC) and large models continue to evolve, financial investment research is entering a new phase of intelligent transformation.

Traditional analytics platforms increasingly struggle with:

- High-frequency trading workloads

- Massive volumes of unstructured data

- Real-time forecasting and decision support

- Strategy backtesting at scale

Bottlenecks in compute density, storage throughput, and system responsiveness can directly impact model efficiency and trading performance. In scenarios involving multi-source heterogeneous data ingestion, real-time market prediction, and large-scale strategy simulation, infrastructure scalability and I/O performance become mission-critical.

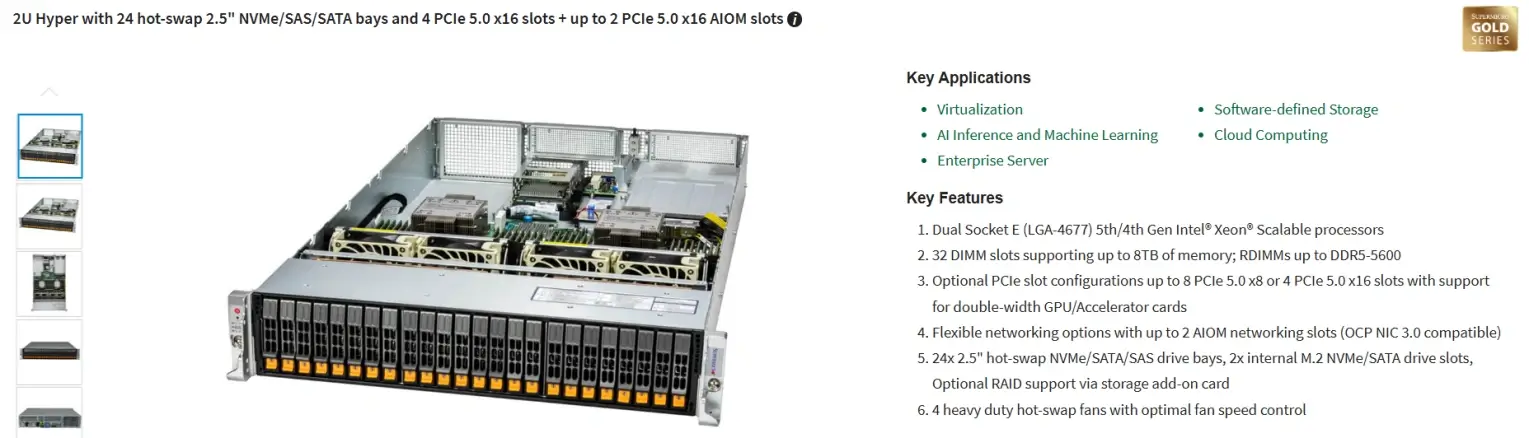

To address these challenges, a high-performance 2U all-flash AI server platform was deployed for a financial institution. The solution integrates the GRAID SupremeRAID™ SR-1010 storage acceleration engine, which significantly increases storage bandwidth and concurrent I/O handling to support data orchestration, model training, and quantitative strategy simulation workloads.

Why Financial AI Workloads Are Storage-Constrained

In AI-driven financial environments, storage performance is often as critical as compute power. Three primary bottlenecks are commonly observed:

1. Rapid Growth of Unstructured Data

Financial text corpora, chart datasets, trading logs, and multi-modal data streams continue to expand.

Data preprocessing and model training pipelines increasingly depend on high-throughput storage. Traditional architectures struggle to keep pace with sustained read/write demand.

2. Multi-Model Deployment and High-Concurrency Access

Quantitative research platforms frequently run multiple strategies and AI models in parallel.

Frequent access to parameter files, intermediate results, and historical datasets places heavy stress on I/O schedulers. Low-latency and high-concurrency storage access is essential to maintain workflow efficiency.
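Latency and concurrency are linked by Little's Law: the average number of I/Os in flight equals throughput (IOPS) multiplied by average latency. The minimal sketch below applies it in both directions. The function names and the latency, queue-depth, and IOPS inputs are hypothetical examples chosen for illustration, not measurements from this deployment.

```python
def required_queue_depth(target_iops: float, latency_us: float) -> float:
    """Little's Law, L = lambda * W.

    target_iops: desired throughput in I/O operations per second (lambda).
    latency_us: average per-I/O latency in microseconds (W).
    Returns the average number of I/Os that must be in flight (L).
    """
    if target_iops < 0 or latency_us < 0:
        raise ValueError("inputs must be non-negative")
    return target_iops * latency_us / 1_000_000  # microseconds -> seconds


def achievable_iops(queue_depth: float, latency_us: float) -> float:
    """Little's Law rearranged, lambda = L / W."""
    if latency_us <= 0:
        raise ValueError("latency must be positive")
    return queue_depth * 1_000_000 / latency_us


if __name__ == "__main__":
    # Hypothetical: sustaining 1,000,000 IOPS at 100 us average latency
    print(required_queue_depth(1_000_000, 100))  # 100.0
    # Hypothetical: 64 I/Os in flight at 80 us average latency
    print(achievable_iops(64, 80))  # 800000.0
```

The same relation shows why latency matters for parallel workloads: if latency doubles while the number of outstanding requests stays fixed, achievable IOPS halves.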

3. Strategy Backtesting and Market Simulation

Backtesting high-frequency strategies with microsecond-level response requirements demands:

- Large-scale dataset retrieval

- High sequential and random read performance

- Stable throughput under sustained concurrency

Storage subsystems must provide not only sufficient capacity, but also consistent high-bandwidth performance to support near real-time simulation and predictive modeling.
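A first-order way to size the bandwidth requirement is to divide the volume of data a backtest must read by the time allowed to read it. The sketch below does that arithmetic. The function name and the dataset size, pass count, and time budget are hypothetical, and it assumes the run is I/O-bound with reads spread evenly over the budget, so real requirements will shift with caching, compression, and access patterns.

```python
def required_read_bandwidth_gbps(dataset_tb: float, passes: int, budget_s: float) -> float:
    """Sustained read bandwidth in GB/s (decimal units) needed to read
    `dataset_tb` terabytes `passes` times within `budget_s` seconds."""
    if dataset_tb < 0 or passes < 1:
        raise ValueError("dataset size must be non-negative and passes >= 1")
    if budget_s <= 0:
        raise ValueError("time budget must be positive")
    total_gb = dataset_tb * 1_000 * passes  # 1 TB = 1,000 GB
    return total_gb / budget_s


if __name__ == "__main__":
    # Hypothetical: 36 TB of tick history, re-read for 5 parameter sets, in 30 minutes
    print(required_read_bandwidth_gbps(36, 5, 30 * 60))  # 100.0
```

Because the requirement scales linearly with the number of passes and inversely with the time budget, doubling the number of strategy variants or halving the time window doubles the bandwidth the storage must sustain.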

Solution Architecture: High Performance × Scalability × Reliability

High-Performance All-Flash Platform

The solution is built on a PCIe 5.0 architecture with all drive bays populated:

- 24 × 30TB enterprise NVMe SSDs

- Total raw capacity of 720TB

- Designed to scale toward petabyte-scale data workloads
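The 720TB figure is raw capacity (24 × 30TB). Usable capacity depends on the RAID level, and operating systems often report binary units (TiB) rather than the decimal TB printed on drive labels. The sketch below shows that arithmetic for a single array built from all 24 drives. The article does not say which RAID level this deployment uses, so the levels are illustrative, the function names are hypothetical, and the calculation ignores hot spares and filesystem overhead.

```python
TB = 10**12  # decimal terabyte, as printed on drive labels
TIB = 2**40  # binary tebibyte, as many operating systems report capacity


def data_drives(drives: int, level: str) -> int:
    """Drives' worth of capacity left for data in a single array."""
    if level == "raid0":
        return drives  # striping only, no redundancy
    if level == "raid5":
        if drives < 3:
            raise ValueError("RAID 5 needs at least 3 drives")
        return drives - 1  # one drive's worth of parity
    if level == "raid6":
        if drives < 4:
            raise ValueError("RAID 6 needs at least 4 drives")
        return drives - 2  # two drives' worth of parity
    if level == "raid10":
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of drives, at least 4")
        return drives // 2  # every block is mirrored
    raise ValueError(f"unsupported level: {level}")


def usable_tb(drives: int, drive_tb: float, level: str) -> float:
    """Usable capacity in decimal TB for one array of identical drives."""
    return data_drives(drives, level) * drive_tb


if __name__ == "__main__":
    for level in ("raid0", "raid5", "raid6", "raid10"):
        cap_tb = usable_tb(24, 30, level)
        print(f"{level}: {cap_tb} TB = {cap_tb * TB / TIB:.1f} TiB")
```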

This architecture delivers high-throughput processing for data-intensive financial research workloads such as:

- Large-scale data ingestion

- High-speed query workloads

- Model training pipelines

- Real-time inference preparation

The platform also leaves room for future expansion to AI inference nodes, distributed scheduling modules, and on-premises large-model deployment.

GRAID SupremeRAID™ SR-1010: Unlocking NVMe Performance

Integrating the GRAID SupremeRAID™ SR-1010 software-defined RAID accelerator brings the following capabilities:

Extreme Performance

- Up to 28 million IOPS

- Up to 260GB/s throughput

- Eliminates traditional hardware RAID bottlenecks

This significantly enhances efficiency for data-intensive AI and quantitative workloads.
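IOPS and throughput are tied together by I/O size: throughput = IOPS × block size. The sketch below applies that relation to the two headline figures. It assumes the IOPS rating refers to small (4 KiB) random I/O and the bandwidth rating to large sequential I/O, which is the usual convention for such ratings but is not stated above; the 128 KiB block size and the function names are hypothetical.

```python
KIB = 1024  # bytes in one kibibyte


def throughput_gbps(iops: float, block_bytes: int) -> float:
    """Throughput in GB/s (decimal) implied by an IOPS rate at a fixed I/O size."""
    return iops * block_bytes / 1e9


def iops_needed(gbps: float, block_bytes: int) -> float:
    """IOPS needed to reach a throughput target at a fixed I/O size."""
    if block_bytes <= 0:
        raise ValueError("block size must be positive")
    return gbps * 1e9 / block_bytes


if __name__ == "__main__":
    # Assumption: the 28 million IOPS rating refers to 4 KiB random I/O.
    print(f"{throughput_gbps(28_000_000, 4 * KIB):.1f} GB/s")  # 114.7 GB/s
    # Hypothetical 128 KiB sequential I/O size for the 260GB/s rating.
    print(f"{iops_needed(260, 128 * KIB):,.0f} IOPS")  # 1,983,643 IOPS
```

At 4 KiB, 28 million IOPS corresponds to roughly 115 GB/s, so the two maxima cannot both hold at the same I/O size: reaching 260GB/s requires larger I/Os, while small-block workloads are bounded by IOPS. Each figure is best read against the access pattern of the workload in question.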

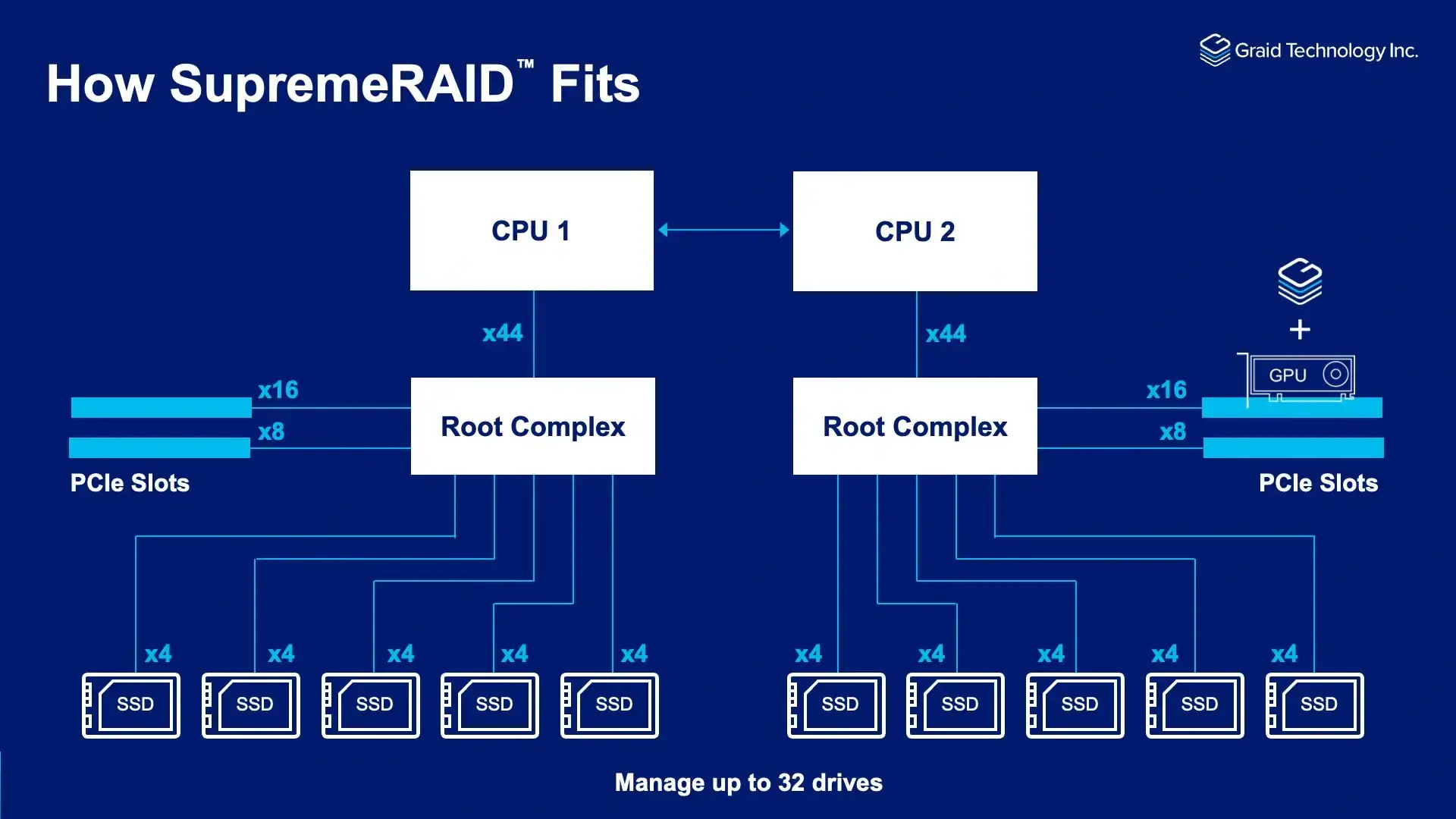

GPU-Accelerated RAID Architecture

RAID computation (such as parity calculation) is offloaded from the CPU to a GPU, which:

- Frees CPU cores for critical analytics tasks

- Improves concurrency handling

- Enhances overall system responsiveness
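To make "RAID computation" concrete: in RAID 5, each stripe stores a parity block that is the bytewise XOR of its data blocks, and any single lost block can be rebuilt by XOR-ing the surviving blocks with the parity. The sketch below runs that calculation in plain Python on the CPU for illustration only. It is not GRAID's GPU implementation, the function names are hypothetical, and RAID 6 adds a second, Reed-Solomon-based parity block that is not shown. In a real array this work recurs on every parity update and every degraded-mode read, which is the load that GPU offload moves off the CPU.

```python
def xor_blocks(blocks: list[bytes]) -> bytes:
    """Bytewise XOR of equal-length blocks."""
    if not blocks:
        raise ValueError("need at least one block")
    size = len(blocks[0])
    if any(len(b) != size for b in blocks):
        raise ValueError("all blocks in a stripe must be the same length")
    acc = 0
    for block in blocks:
        acc ^= int.from_bytes(block, "big")  # XOR the whole block as one integer
    return acc.to_bytes(size, "big")


def compute_parity(data_blocks: list[bytes]) -> bytes:
    """RAID 5 parity block for one stripe."""
    return xor_blocks(data_blocks)


def rebuild_block(surviving: list[bytes], parity: bytes) -> bytes:
    """Recover the single missing data block of a stripe from the rest plus parity."""
    return xor_blocks(surviving + [parity])


if __name__ == "__main__":
    stripe = [b"\x01\x02", b"\x10\x20", b"\xff\x00"]
    parity = compute_parity(stripe)
    print(parity.hex())  # ee22
    # Simulate losing the second drive and rebuild its block.
    rebuilt = rebuild_block([stripe[0], stripe[2]], parity)
    print(rebuilt == stripe[1])  # True
```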

Native NVMe & NVMe-oF Support

- Supports up to 32 local NVMe SSDs

- Fully utilizes PCIe Gen 3/4/5 bandwidth

- Enables near-linear performance scaling as drives are added
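The PCIe generation sets a ceiling on what each drive's link can carry. Gen 3, 4, and 5 signal at 8, 16, and 32 GT/s per lane and all use 128b/130b encoding, so per-lane bandwidth is roughly GT/s × 128/130 ÷ 8 GB/s in each direction. The sketch below applies that to x4 NVMe links. The x4 width is an assumption (it is the common width for enterprise NVMe SSDs but is not stated here), the function names are hypothetical, and the results are theoretical maxima before packet and protocol overhead.

```python
# Per-lane signalling rate in GT/s for each PCIe generation.
GT_PER_S = {3: 8, 4: 16, 5: 32}
ENCODING = 128 / 130  # 128b/130b line encoding used by Gen 3 through Gen 5


def link_gbps(gen: int, lanes: int = 4) -> float:
    """Theoretical one-direction bandwidth of a PCIe link in GB/s,
    after line encoding but before packet/protocol overhead."""
    return GT_PER_S[gen] * ENCODING / 8 * lanes


def aggregate_ceiling_gbps(gen: int, drives: int, lanes: int = 4) -> float:
    """Sum of per-drive link ceilings; ignores CPU lane or switch uplink limits."""
    return link_gbps(gen, lanes) * drives


if __name__ == "__main__":
    for gen in (3, 4, 5):
        print(f"Gen {gen} x4: {link_gbps(gen):.2f} GB/s per drive, "
              f"{aggregate_ceiling_gbps(gen, 24):.0f} GB/s across 24 drives")
    # Per-drive share if 24 drives were to deliver 260GB/s together.
    print(f"{260 / 24:.2f} GB/s per drive")
```

Under these assumptions, about 10.83 GB/s per drive exceeds a Gen 4 x4 link (about 7.88 GB/s) but fits within a Gen 5 x4 link (about 15.75 GB/s), which illustrates why a PCIe 5.0 platform matters for approaching the accelerator's bandwidth rating. Whether the drives themselves sustain such rates depends on their own specifications, and near-linear scaling holds only until a shared limit such as CPU lanes or a PCIe switch uplink is reached.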

High Availability & Maintainability

- Battery-free RAID design eliminates battery degradation risk

- Improved long-term reliability

- Reduced maintenance overhead

Cross-Platform Compatibility

- Supports Linux and Windows environments

- Flexible integration into enterprise financial infrastructures

In performance validation testing, the platform sustained stable, high-throughput reads and writes under concurrent workloads, keeping data flowing continuously to AI model training and real-time inference.

Building the AI Infrastructure Foundation for Financial Intelligence

As financial institutions transition toward AI-driven investment research and decision-making, infrastructure must evolve from traditional storage architectures to high-performance, scalable, and intelligent platforms.

We focus on delivering integrated AI infrastructure solutions that combine:

- AI servers

- High-performance computing platforms

- Private model deployment environments

- High-throughput storage clusters

Through vertically integrated hardware and software optimization, our goal is not only to provide compute capacity but also to deliver deployable, production-ready AI capabilities.

By shortening the path from infrastructure deployment to analytical value, organizations can accelerate innovation cycles and strengthen their competitiveness in increasingly data-driven financial markets.

Compute drives intelligence. Storage sustains performance.

A resilient all-flash foundation is essential for real-time financial analytics at scale.